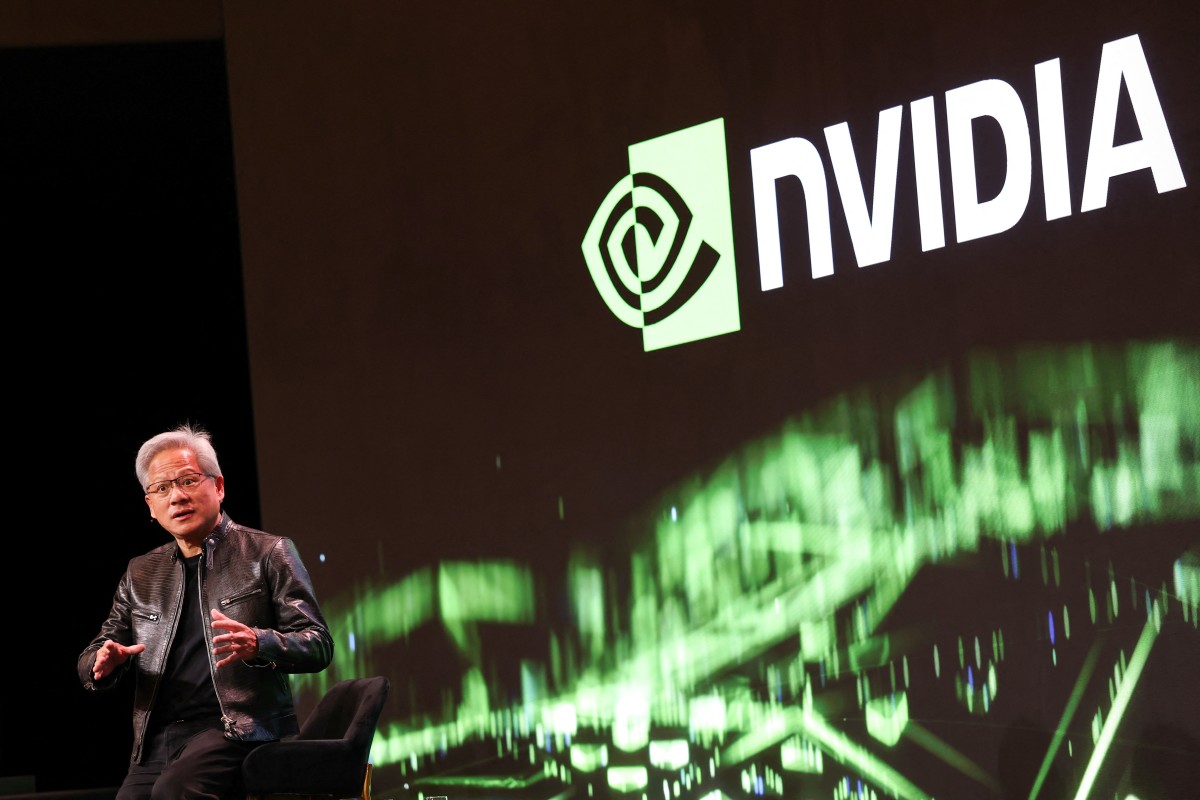

Bank of America drops a surprising Nvidia warning before earnings

Nvidia (NVDA) is now firmly in earnings mode. The semiconductor giant will report earnings on Wednesday, Feb. 25, after the market close. The report, a crucial marker for the chip giant, has drawn significant input from analysts and stakeholders.

But a fresh analyst take on the eve of the earnings report is drawing significant attention.

The note focuses on an overlooked part of the stack. Per the bombshell analyst note, that part of the stack could, by inference, widen the conversation about who wins.

Ultimately, Nvidia’s looming earnings report will take over the AI trade again. But the Feb. 23 analyst note tries to make the case that the markets are only factoring in part of the picture.

That shift could reshape how investors think about Nvidia, Advanced Micro Devices (AMD), Arm Holdings (ARM), and Intel (INTC) as we head into the next part of the AI buildout.

That does not mean GPUs will stop mattering. Instead, it means the market might not fully understand what happens when AI workloads shift from training to deployment, where system control, scheduling, memory orchestration, and latency become more important.

Nvidia earnings are the main event, but the setup is getting more complicated

Nvidia’s looming earnings are hard to ignore. They are the main headline risk event for semiconductor investors.

The usual questions will be important: How long will demand last, how much will hyperscalers spend, how will products change, and how should investors think about the speed of AI infrastructure spending from here?

But reading Bank of America analyst Vivek Arya's note, I think he makes a nuanced argument: Nvidia’s largest upside will increasingly come from owning more of the system, not just the accelerator.

That matters primarily because the valuation debate around Nvidia centers on whether GPU demand can keep compounding at the same rate.

If inference leads to more capital outlay on compute, memory, networking, and control layers, Nvidia’s earnings story could become more stable if management shows it is getting more of that stack.

The hidden shift is inference and that changes what hardware gets paid

The note’s core argument is straightforward. AI training and AI inference are different workloads. As a result, they reward different hardware mixes.

Arya said that “AI inference is control-heavy and needs more CPUs,” particularly for orchestration, scheduling, memory management, and processing output one token at a time. So GPUs still do most of the work, but CPUs remain important for keeping the system fast and responsive.

Bank of America now forecasts server CPU demand to increase materially as that shift plays out, with the note projecting “server CPU TAM reaching ~$60bn by CY30E from just $27bn in CY25.”
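To put that forecast in annual terms, here is a minimal sketch of the implied growth rate. It assumes smooth yearly compounding over the five years from CY25 to CY30, which the note does not state; the function name `implied_cagr` and the variable names are illustrative, and only the two dollar figures come from the note.

```python
# Implied compound annual growth rate (CAGR) between the note's two endpoints.
# Assumption: constant annual compounding over five years (CY25 -> CY30).
# The note itself gives only the start and end figures, not a growth path.

def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the constant annual growth rate linking start_value to end_value."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


tam_cy25_bn = 27.0  # from the note: "$27bn in CY25"
tam_cy30_bn = 60.0  # from the note: "~$60bn by CY30E"

cagr = implied_cagr(tam_cy25_bn, tam_cy30_bn, years=5)
print(f"Implied server CPU TAM CAGR: {cagr:.1%}")
```

Under those assumptions, the forecast works out to roughly 17% growth a year, which is another way of saying the server CPU market would a bit more than double in five years.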

I believe the thesis changes how investors should think about the AI capex cycle. The question is:

- Not just who sells the best accelerator

- But also who captures the most value across the full inference system

Nvidia may be a CPU story, too, and that’s the part many investors miss

This is the part where the analysis becomes more intriguing for Nvidia specifically.

“We also see NVDA’s (ARM-based) role in CPUs growing,” Arya said, pointing again to Nvidia’s expanding CPU footprint as it builds a bigger AI inference portfolio.

The note discusses Nvidia’s opportunity to sell a standalone CPU and to fold CPUs into its platform strategy.

That is a bigger deal than it may seem at first glance.

If Nvidia can make its ARM-based CPUs a necessary part of a tightly integrated inference stack, it could help keep customers locked in, get more people to use the platform, and give the company another way to make money besides selling GPUs.

That creates tension heading into earnings, which hinges on two questions.

- Is Nvidia still the GPU king?

- How much of the AI system can Nvidia own next?

AMD and Arm look like direct beneficiaries, while Intel faces a harder read

Bank of America’s note also offers a clean read-through for peers.

The firm sees AMD and Arm continuing to gain share in server CPUs.

On the flip side, Intel is under intense scrutiny as the market moves toward architectures and vendors better positioned for inference-era AI workloads.

The note says Bank of America maintains:

- Buy on Nvidia

- Buy on AMD

- Neutral on Arm (while raising its price objective to $140 from $135)

- Underperform on Intel

Arya sees “potential for greater ARM share gain toward 20-25%+ by CY30E,” which makes it harder for every other vendor to maintain or grow its role in data center compute.

What Nvidia investors should listen for on the earnings call

Nvidia’s earnings will largely focus on revenue, guidance, and trends in AI demand.

But investors who want to get ahead should pay close attention to what management says about inference and platform breadth.

Watch for commentary on:

- Inference mix: Is demand continuing to broaden from training clusters into inference deployment at scale?

- CPU strategy: Is there any detail on CPU adoption, customer engagement, or platform integration in the earnings report?

- System architecture: How is Nvidia increasing its market share in compute, memory, networking, and software together?

- Customer behavior: Are hyperscalers and enterprise buyers making choices that help Nvidia’s larger stack by optimizing for throughput, latency, and total cost of ownership?

Nvidia essentially needs to reinforce the narrative that AI spending is evolving, not fading. If it does, the bull case will expand by showing Nvidia has more ways to win as AI infrastructure matures.

The stock angle heading into earnings

The market still believes that Nvidia is the biggest player in AI accelerators. That is fair. However, the note suggests that investors should look deeper.

The near-term trade heading into Wednesday, Feb. 25, is still about Nvidia’s earnings. However, in the longer term, the trade could be about whether Nvidia can convert its GPU dominance into broader control of AI inference infrastructure.

If that occurs, the next Nvidia story may not be “GPUs versus everyone else.”

Instead, it could be that Nvidia owns most of the system, and everyone else has to fight for the scraps.
